Revaluing the Real

What is a simulation? The once-feared, now defanged and nearly forgotten Jean Baudrillard wrote in Simulacra and Simulation that “To simulate is to feign to have what one doesn’t have.” In other words, simulations are a subspecies of lie, even if they are occasionally useful ones. Emergency crews and the military require simulations in order to keep their edge. A practice test can also be a kind of useful simulation. But we can just as easily imagine scenarios in which simulations are put to less practical ends, or achieve morally ambiguous results. The worst of these might be mistaking the simulation for reality, or “the map for the territory,” as the saying goes. Of course, philosophers such as Immanuel Kant, Graham Harman, and perhaps even Plato might argue that even the sensory information we receive moment to moment is, in its own way, a simulation of a much more profound reality just beyond the grasp of the immediate. As the gnostic sci-fi writer Philip K. Dick warned in The Dark-Haired Girl, “This is a cardboard universe, and if you lean too long or too heavily against it, you fall through.” But into what?

Whether or not we require simulation in order to experience reality, and just how much truth might exist within simulation itself, are perennial philosophical snares. Sherry Turkle avoids them altogether by grounding her thought in the tradition of Francis Bacon, the 17th-century English philosopher and statesman who is commonly recognized as the father of empiricism. His phrase, which haunts Simulation and Its Discontents like a presiding spirit, is “Scientia est potentia,” or knowledge is power. Although Turkle does not cite it, the following passage from Bacon’s Novum Organum eloquently demarcates the contours of her inquiry:

Human knowledge and human power meet in one; for where the cause is not known the effect cannot be produced. Nature to be commanded must be obeyed; and that which in contemplation is as the cause is in operation as the rule.

In other words, by using nature to achieve material ends, we understand nature better. This is a book that takes as its starting point not ontology or epistemological truth claims, but use value. In doing so, it accurately reflects the modern preference for praxis over philosophy and the transformation of homo sapiens into homo faber. Simulation and Its Discontents is less a philosophical tract than a search for professional best practices. As such, it’s a useful artifact for understanding how the people who change our world most directly, scientists and engineers, think about their tools and their goals.

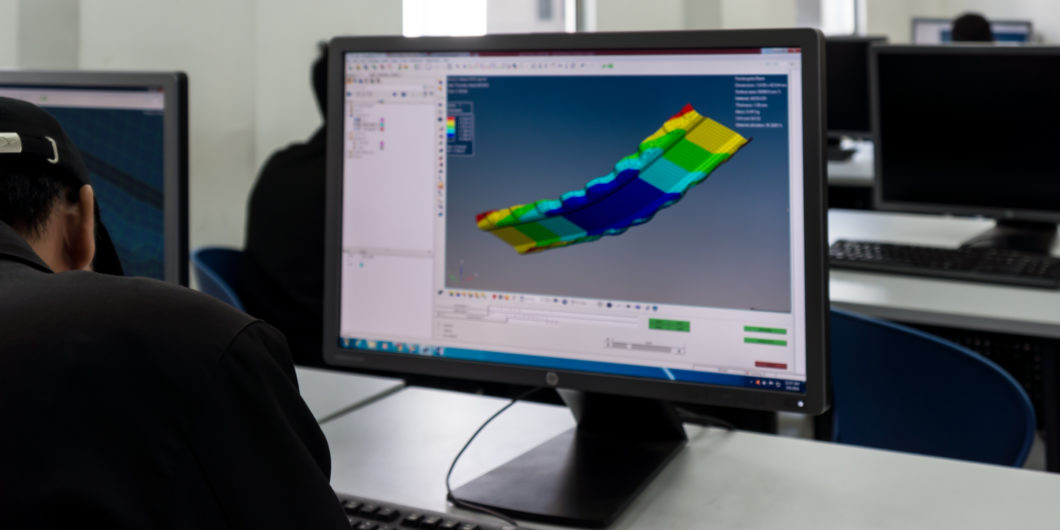

The “simulation” in Simulation and Its Discontents refers primarily to the use of computer applications developed within the past thirty years to help scientists and architects do their work. Turkle herself considers these simulations a niche kind of tool, and a tool in the simplest, most commonsense way: a thing you use in order to solve a much more complicated problem. There’s a pragmatism to this. In eschewing philosophical depth, Turkle is able to focus on the granular details of how these computer programs are implemented. Abandoning any pretension to think through the thornier problems of simulation, the book almost becomes an oral history of the adoption of computers at MIT beginning in the 1980s. But Sherry Turkle’s occasional gestures at analysis keep her from becoming a tech-world Studs Terkel.

In 1983, MIT launched “Project Athena,” in which “[a]cross all disciplines, faculty were encouraged, equipped, and funded to write educational software to use in their classes.” Turkle reminds us that, at the time, widespread use of personal computers wasn’t something that was assumed to be inevitable. The history of Athena “brings us back to a moment when educators spoke as though they had a choice about using computers in the training of designers and scientists.” Of course, they didn’t. It was going to happen whether they wanted it to or not. “From the time it was introduced,” Turkle writes, “simulation was taken as the wave of the future.” Architects were meant to design on the computer. Chemists to model molecules. Physicists to solve complex mathematical problems. Any complaints people might have had were tossed into the waste bin of history.

And people did have complaints. Turkle describes light pushback, generally coming from older professors, and typically covering three basic issues: the skills students lose when not doing work by hand, the lack of transparency in software code, and the way in which mandating computer use leads to rigidity in roles. The first complaint is the simplest and most predictable. And the second seems straightforward as well—if the numbers being generated in an experiment depend upon software, then you need to understand how that software works or you can’t explain your results. These concerns overlap. As Turkle writes, “For architects, drawing was the sacred space that needed to be protected from the computer. The transparency of drawing gave designers confidence that they could retrace their steps and defend their decisions.”

But the sort of “transparency” the reader is most familiar with has to do with access to source code. Software makers have an interest in keeping their code proprietary, protected in a “black box,” and we’ve come to define “transparency” solely by our ability to interact directly with that code. Interestingly enough, Turkle tells us, “…today’s professionals have watched the meaning of the word transparency change in their lifetime.” In the early 1980s, command lines on your computer screen reminded you of the code you were working with. With the advent of the Apple Macintosh in 1984, with its graphical user interface and double-click icon access, “transparency” suddenly came to have an inverse meaning: “being able to use a program without knowing how it works.” Of course, this shift seems to have as much to do with economics and law as anything else, but here Turkle leaves us free to speculate.

Turkle delicately touches on, and then passes over, how scientific knowledge is used to organize society. James C. Scott’s classic Seeing Like a State is handy supplemental reading here. In that study, Scott articulates a criticism of what he calls “high modernism,” or the technocratic ability to control and shape society through scientific prowess. Often, this can be as simple as standardizing weights and measures or legally forcing people to take surnames. But the process always requires a simulation, necessarily oversimplified, of society and man. Scott writes:

One of the major purposes of state simplifications, collectivization, assembly lines, plantations, and planned communities alike is to strip down reality to the bare bones so that the rules will in fact explain more of the situation and provide a better guide to behavior. To the extent that this simplification can be imposed, those who make the rules can actually supply crucial guidance and instruction. This, at any rate, is what I take to be the inner logic of social, economic, and productive de-skilling. If the environment can be simplified down to the point where the rules do explain a great deal, those who formulate the rules and techniques have also greatly expanded their power. They have, correspondingly, diminished the power of those who do not.

Read in this context, Turkle’s book becomes background material for understanding the methods by which society is organized and controlled. If we want to understand why our cities are designed the way they are, or why our doctors explain our health to us in a certain language, we also need to understand the simulations used to extrapolate reality from Reality. We need to understand how our own lives are simplified and modeled if we want to understand the ways in which our experiences are being shaped.

We also need to be aware of the essential ways in which simulations breed overconfidence in technology. If we step outside the temporal scope of the book and move more freely through time, going back at least to Turing and the creation of the first computers, we find the first simulation of human thought performed by a discrete-state machine. The brain, of course, is not a discrete-state machine. But it can simulate one, if less efficiently, with startling results. So what a computer actually does is simulate an organic entity that is itself in the act of simulating. The writer Roberto Calasso tells us that this mistaking of the brain for its act of simulating is what’s known as the closed vessel problem.

The closed vessel problem is conceptually simple. Every model of reality, whether it be a computer program, architectural plan, or map, is necessarily imperfect. Because it exists within reality itself, the model can never capture the full heft of the real. A model expresses, in a simplified way, the world outside of itself, while reality contains the model as well. No matter how accurate a rendering of, say, the human body may be, it is constrained by its materials, and some part of the real thing will always elude it. Reality is too rich to be perfectly captured in any rendering, model, or experiment. Remarking on this in her notes, Simone Weil wrote, “Essential contradiction in our conception of science: the fiction of the closed vessel (foundation of every experimental science) is contrary to the scientific conception of the world. Two experiments should never give identical results. We overcome it through the notion of the negligible. But the negligible is the world…” In other words, to return to Francis Bacon, power is a sort of knowledge, but it’s purchased at a cost: the rejection of the full variety of life itself.

Turkle’s book is admirable in its clarity and focus. In refusing to entangle itself with the larger philosophical issues of simulation, it avoids getting lost in the weeds of phenomenology or turning into a rambling, diffuse political tract. It remains about one specific thing: the experiences of a handful of students and professors at MIT as they implement simulation. It’s not about anything else, and we often learn more from such books precisely because of their simplicity. Writing about certain professors’ sense of “sacred” practices, such as drawing by hand or making physical models of molecules, Turkle observes:

But even if this notion of sacred space now seems quaint, what remains timely is finding ways to work with simulation yet be accountable to nature. This is a complex undertaking: as we put ever-greater value on what we do and make in simulation, we are left with the task of revaluing the real.

It’s unclear exactly who Turkle’s “we” is supposed to be. Perhaps the most illuminating fact to emerge from the book’s faith in its own subject is the real chasm that separates the practitioners of scientific simulation from the rest of us, citizens left with no choice but to find our way in a world entirely constructed by the technocrats of Scott’s “high modernism.” They create reality while we’re left to interpret and, hopefully, survive it. And as an aide to President George W. Bush famously told reporter Ron Suskind, while we’re “studying that reality,” the protagonists of Simulation and Its Discontents will be busy building a new one.