September 11’s Long Shadow

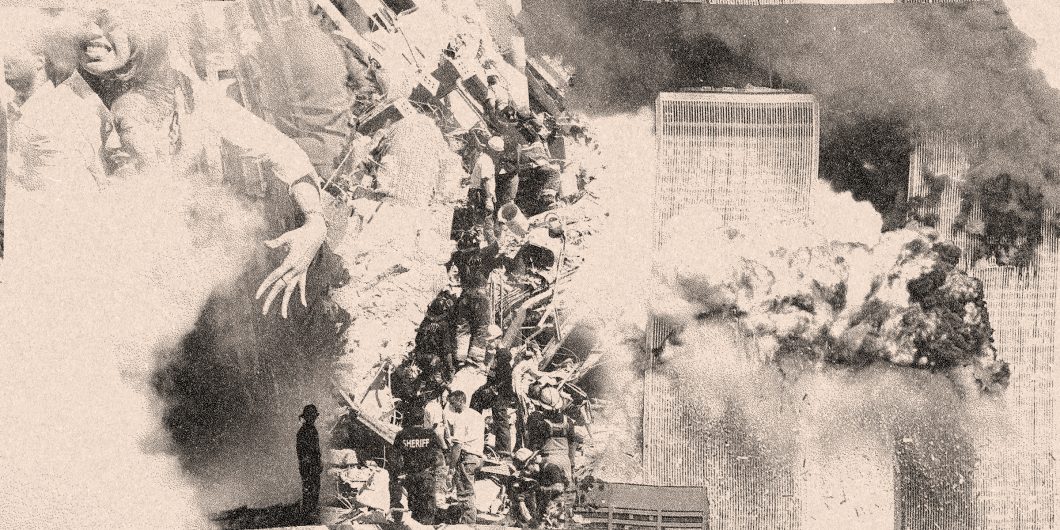

After the Japanese attack on Pearl Harbor, Franklin Delano Roosevelt famously called December 7, 1941 “a date which will live in infamy.” The same words describe the terrorist strike against the United States on September 11, 2001. Events that day captured global attention through live media coverage as shocked viewers struggled to make sense of what they saw. Terrorists hijacked four airliners fueled to capacity and used them as flying incendiary bombs. Crashing two into the World Trade Center inflicted massive casualties and paralyzed New York along with the financial system based there. A third plane hit the Pentagon near Washington, while plans to destroy the US Capitol were thwarted only when passengers seized control of the fourth and brought it down in rural Pennsylvania. Uncertainty heightened tension as the scale of destruction and lost lives became apparent. The word shock hardly captured the feeling at the close of that long day.

Looking back on 9/11 twenty years later underlines what a turning point it was. The date became shorthand for terrorist attacks that shattered the optimism of a post-Cold War era where history had supposedly ended. A new protracted conflict with radical Islamic extremism seemed to have begun over American skies. Responding to terrorism dominated the next decade, but the responses created their own problems. Much has been said about what George W. Bush and his administration did after 9/11, but other issues they and the American leadership class neglected as a result mattered just as much. The consequences only later came into view.

9/11 marked a tragedy in more ways than one. A destructive event that killed nearly 3,000 people and caused great distress, it sparked rightful outrage, and the response that outrage drove did more harm than the attacks themselves. How Americans, especially those at the highest levels of government, experienced the day helps explain their response. Shock and uncertainty made what Michael Mandelbaum rightly described later as “a horrible, but isolated moment in American history” the catalyst for steps that leaders would not otherwise have taken and the public would not otherwise have supported. They also made it a defining moment.

The Dominion of Risk

Those with conscious memories of 9/11 remember where they were when they heard the news, much as earlier generations remembered Pearl Harbor or John F. Kennedy’s assassination. But people experienced the day very differently. Early reports of an aircraft colliding with one of the twin towers were quickly replaced with gripping live coverage of an unfolding catastrophe beyond the scale of earlier terrorist attacks. With both towers burning in Manhattan and first responders mobilizing to help, a third flight hit the Pentagon. US airspace closed by mid-morning. President Bush left a scheduled event in Florida only for Air Force One to be diverted.

With the White House, Capitol, and other buildings under threat, officials and staff took shelter even as many kept busy responding to events. Dust shrouded New York when the towers collapsed, killing emergency workers and people trying to evacuate. Business districts in cities across the country shut early. Their streets resembled ghost towns. Nobody knew quite what would happen next. Recovery from the largest terrorist attacks in American history dominated news coverage over the coming weeks, as nightly television reports from Ground Zero at the World Trade Center kept the devastation at the forefront of attention.

How Americans experienced 9/11 and its aftermath explains the response. The shock exposed not only the country’s vulnerability, but also intelligence and security failures. Despite warnings, the Clinton administration had made only limited attacks on terrorist bases, while the incoming Bush administration set a low priority on terrorism as its security and foreign policy team moved into place. Terrorism largely happened overseas and typically could be managed or even ignored. Even mass casualty bombings of US embassies in Africa and a more recent strike against the destroyer USS Cole in Aden gained little traction with the American public. 9/11 was very different. Terrorism went from something over there to an immediate threat right here to be met by any means necessary. Failure to prevent the attacks or take the danger seriously made officials resolve not to make that mistake again. Indeed, they overcompensated.

Ironically, al Qaeda’s success removed the conditions that made it possible. Their plot relied on catching victims unawares. Airline hijackings since the 1970s followed a familiar script where passengers, crew, and airlines understood that cooperation meant survival, but turning planes into flying bombs made resistance the only choice. Passengers fighting back on their own initiative brought down Flight 93, probably saving the US Capitol. The script changed for good, and a whole new set of precautions hardened planes as a target. Caution took hold. No longer would employees, security, or the public brush aside doubts about suspicious characters as prejudice the way some had done right before 9/11. If that meant profiling, nobody cared. Beyond the skies, vigilance made it harder for terrorists to plan or act without exposure. Even before governments tightened security measures and the US spent more than $1 trillion over the next decade, their chance had passed. Bin Laden and his henchmen could only be lucky once.

Americans nonetheless felt what the political scientist John Mueller aptly called “a false sense of insecurity.” Media coverage amplified the public’s sense of risk. Richard Clarke, the chief counter-terrorism official on the National Security Council who had warned ineffectually against al Qaeda before 9/11, sketched a lurid counterfactual in 2005 after leaving government of another terrorist wave against soft targets that spread fear, mobilized the country behind a domestic security campaign, and infringed civil liberties. His account of what did not happen overstated vulnerabilities, but it captured very real anxieties that shaped policy and the public mood. Who could question the need for sweeping change? Leading Democrats joined with a Republican administration in the name of security. Warnings about dangers to civil liberties were dismissed, at least until what changes meant in practice became apparent. In the meantime, worst case scenarios set the tone.

Reforms established a new Department of Homeland Security bringing relevant agencies, except the FBI and CIA, into a single administrative structure with more funding and personnel. The experience of flying changed entirely. Electronic surveillance increased with much wider collection of private communication and less concern for privacy. Authorities had broad latitude for treating “unlawful combatants” captured overseas or using lethal force against suspected terrorists outside the domestic legal process. Drone strikes subjected American citizens and foreign nationals to targeted killings. Minimizing both risk to personnel and media attention made these remote strikes an attractive option for countering terrorism. These steps abroad set precedents law enforcement might apply later at home. Only gradually did concerns about expense or civil liberties, not to mention growing complaints about “security theater” involving measures which served no purpose beyond testing public compliance, strike a dissonant note. A well-funded private sector counterterrorism industry—often drawing on grants from state and local government—had a stake in keeping concern high. Calculating risk differently against the prevailing expert consensus helped neither careers nor reputations.

Confusions of the Global War on Terror

Fighting terrorism went beyond defensive efforts as 9/11 removed constraints on vigorous military action overseas. The Bush administration determined with broad support, including from Congressional Democratic leaders, to drain the swamp in which terrorists like al Qaeda had found refuge. Bringing bin Laden and other perpetrators to justice made Afghanistan an immediate target. The campaign to overthrow the Taliban regime that had sheltered al Qaeda used airpower and special forces to support local allies in a combined operation whose quick success raised confidence in what American military power could do. Bin Laden’s escape mattered less than driving out the Taliban and disrupting terrorist operations.

At first, it seemed that Afghanistan marked an easy victory in what American strategists viewed as a new protracted conflict along the lines of the long, twilight struggle of the Cold War. Denying terrorists a refuge there had been leftover business from the 1990s. Iraq and overthrowing Saddam Hussein’s Baathist regime quickly became the next item on that “to do” list. A search for options empowered those with plans on the shelf. Deputy Defense Secretary Paul Wolfowitz pushed early for military action against Saddam. Plans for aggressive action to extend US influence now had a compelling argument as did a larger effort to organize security policy around stabilizing a now endangered global commons.

The Cold War focus on superpower rivalry and the optimism fueled by its triumphal close overshadowed problems involving the fragility of political order in much of the world. Those longstanding challenges became more apparent in the context of 9/11. An emphasis on economic development in newly independent colonies during the 1960s missed the need for governments there to effectively govern. Weak civil society and even weaker institutions brought unrest often compounded by outside intervention and ideological rivalry among foreign powers. Failed states, as Robert Kaplan chronicled in Africa and elsewhere, became a growing problem. Many despotic governments, like Iraq’s, were more brittle than the despotism they exercised indicated. Without harsh rulers to hold them together states became little more than lines on a map. Sometimes even the harshest regimes could not or would not keep terrorists and organized crime from using their countries as an operating base.

Failure to understand the cultural and social environment behind these problems impeded efforts to manage or even diagnose them effectively. American elites had successfully faced the different challenges of great power rivalry in Western and Central Europe during the 20th century because they knew those societies well. They also had a grasp of dynamics in East Asia and the Caribbean from long experience. Other regions presented a very different story. Most Americans who made and implemented policy did not understand local conditions or the factors shaping them, which meant they could not judge circumstances or frame policies to meet them effectively. Relying instead on social science theory and misleading analogies led them astray.

Humanitarian intervention during the 1990s responded to the problem of failed states, but lacked domestic support in the US and other countries. Indeed, Bush campaigned in 2000 against “nation building.” Professional military officers feared repeating the experience of Vietnam and saw operations other than war as a distraction from their core mission. The Weinberger Doctrine—later renamed after General Colin Powell—sought to avoid quagmires by requiring domestic support, clear goals, and a high prospect of success for military action. It certainly ruled out policing operations that echoed colonial wars of the past. Success during the 1991 Gulf War had banished the Vietnam Syndrome by deploying weapons, doctrine, and tactics developed to overcome Soviet numerical superiority in Central Europe against a weaker opponent in a more favorable desert environment. Civilians in the Clinton administration then sought to use military capabilities for a wider range of operations that set precedents even as they drew opposition.

Discussion over the merits of intervention ended on 9/11. Globalization had brought conflicts from the periphery into the heart of the developed world. The historian Edward Gibbon had claimed in the 1770s that distance and technological superiority prevented barbarism from overwhelming civilization as it had overwhelmed the Western Roman Empire, but neither seemed to ensure safety in 2001. American leaders now found themselves embracing the idea that barbarians increasingly had to be tamed abroad or faced at home. Influential commentators took empire as a model for securing the global commons and reconstructing the greater Middle East, and their arguments offered support for a new, more ambitious strategy. A bipartisan consensus in Washington with broad public support authorized military force not only against terrorists and in Afghanistan, but also to overthrow Saddam in Iraq.

Salafist Islam took communism’s place as an ideological foe, although Bush took pains to avoid framing the war on terror as a religious war and spoke of Islam as a religion of peace. Comparisons with Stalinism and the term “Islamofascism” along with a commitment to promoting democratization show the appeal of ideological struggle as a motivating force or organizing intellectual principle. 9/11 pushed to the forefront Samuel Huntington’s controversial argument that cultural or civilizational divides would be the primary fault line for international politics. His model aimed to explain rising tensions with China and other challenges, but the observation that Islam has bloody borders resonated given recent conflicts. Policymakers determined that instead of raising barriers to exclude Muslims, shocking the Middle East into democratic reform would change the toxic culture that fostered Islamic terrorism. Military defeats showing the weakness of despotic regimes would make a space for transformative change. They would also demonstrate the price for aiding terrorism.

Iraq, like Afghanistan, indicated the limits of American and allied military power. Conventional operations proved relatively easy. Technology and the operational skill of commanders and their troops made conquest a quick matter, but the next steps faltered. General David Petraeus anticipated the problem beforehand with the rhetorical question “tell me how this ends?” Allied forces had neither manpower nor effective plans to maintain order and keep essential services running after Saddam’s regime entirely collapsed, leaving a vacuum. Warfighting replaced effective strategy balancing the aims set by policy against the available means, but operational skill could not turn battlefield success into political outcomes. Indeed, observers like the historian Hew Strachan questioned whether Anglo-American militaries had lost their grasp on strategy as a principle to guide both action and deliberations with civilian superiors.

A template for reconstructing Iraq mistakenly borrowed from post-World War II Germany and Japan failed. Those occupations involved countries with civil societies, recent experience of democratic governance and rule of law, and functional state institutions. Germans and Japanese accepted defeat and cooperated with occupying authorities, while Iraq reverted to tribalism and a Hobbesian struggle between religious and ethnic groups. The US lacked active and effective local military support there or in Afghanistan. Mission creep in the latter squandered early success and turned nation building into a counterinsurgency that became America’s longest war. Lack of an effective strategy made the whole effort what a later Pentagon audit likened to a self-licking ice cream cone. If firepower alone could work, the earlier Soviet occupation would have succeeded rather than ending in humiliating defeat. Mismanaged US development aid distorted Afghanistan’s economy with uncontrolled corruption. Hopes for transforming these regions curdled into disillusionment as American casualties grew. Both episodes showed that power and reality nearly always confound visions and ideology. Iraq also had steep diplomatic costs that eroded the international goodwill and support that followed 9/11. The elections of Barack Obama and Donald Trump repudiated the whole project along with the bipartisan leadership behind it.

Things Left Neglected

Like Aaron’s rod and the serpents in the Old Testament, the conflicts spawned by 9/11 swallowed all other business and concerns. China offers a telling example. A collision between an American surveillance plane and a Chinese fighter in April 2001 brought a tense diplomatic confrontation when the former made a forced landing on Hainan Island. The crew’s release came only after an expression of regret from Washington over an infringement on Chinese airspace. Thomas Friedman warned at the time about the risk Beijing faced of an American backlash against such treatment. US policy had favored China over the past decade by opening access to the global market. The Clinton administration had criticized human rights abuses and deployed ships in the Taiwan Strait as support for the island country’s resistance to coercive diplomacy from the mainland but did nothing to check the transfer of both technology and manufacturing to China.

Chinese ambitions backed by recent economic growth had become a concern by the late 1990s. Would reliance on foreign trade and integration with the global order liberalize China or build it into a peer competitor of the US? Opinion on the question differed among American policy elites, but the war on terror and its focus on the Middle East took attention from the debate. China’s accession to the World Trade Organization in December 2001 pushed the economic door open further in a continuation of longstanding policy. It seems likely that nationalist-minded figures who emphasized hard power like Donald Rumsfeld and Dick Cheney would have pushed a firmer line had they not been focused elsewhere. A naval and air strategy to deter China would have been a higher and earlier priority. The US military’s force structure would have served that task instead of being reconfigured for irregular ground operations in the Middle East at the conventional navy’s and air force’s expense. Only quite recently did the need to reverse those priorities become accepted.

Accommodating China had important domestic consequences for the US. Prosperity during the 1990s hid from view the impact globalization had on many regions where deindustrialization took hold. Earlier competition with Japan had raised alarms over American competitiveness, with some like the historian Paul Kennedy drawing parallels with Britain’s decline, but those arguments seemed dated by the late 1990s. Many Japanese and German companies shifted production to American factories and Japan faced its own difficulties with plateauing growth. But outsourcing manufacturing still devastated regions, while the post-Cold War peace dividend cut defense spending with consequences for aerospace and other sectors in California. Moving the production of furniture and other goods to China left communities without employment. Those with options took them and left for a better life elsewhere. Others adapted to an environment where productive work seemed a thing of the past.

Rust belt decline involved more than a local tragedy. Tony Judt deplored how structural unemployment devastated European industrial regions during the 1990s. Welfare prevented dire poverty but removed a sense of purpose from working class communities. French areas that had long voted socialist or communist also came to reliably back the National Front. Christophe Guilluy showed how globalization transformed the social geography of France from 2000, with hollowed out regions and prosperous metropolitan ones drawing apart in mutual hostility that has parallels in the US and elsewhere. Parties of the left on both sides of the Atlantic had abandoned workers and their economic concerns for social issues.

Opioid and methamphetamine addiction plagued America’s declining communities with a widening gap between those who benefited from the new order globalization had brought and others stuck with the consequences of decline. It also bears noting that the military tasked with fighting the global war on terror recruited heavily from those declining regions along with rural areas rather than affluent metropolitan ones. Failure abroad reinforced alienation over conditions at home. Huntington’s protégé James Kurth made a revealing point in the mid-1990s that the real clash lies within civilizations rather than between them. Conflicts he anticipated between progressive elites and a more traditionalist public came to the fore during the 2010s with the impact of other developments.

A spike in energy prices brought on by the Iraq War became a catalyst for the 2008 financial crisis when already strained family budgets struggled to meet higher costs for essential items. Other factors had created a real estate bubble in both Europe and the United States that bank mergers and regulatory policy made a systemic risk. The Great Recession brought on by the crash devastated public confidence. If 9/11 brought people together in a shared moment of patriotic unity, the economic crisis damaged public trust in both leaders and institutions. It marked a failure that coincided with disappointments abroad even as terrorism became less and less a concern at home. A slow and weak recovery that left deprived regions further behind amplified the effect of the crash while polarizing opinion. Even when few spoke openly about it, Americans noticed that nobody faced accountability for failures either at home or abroad. Gallup polls show that since 2011 between 15% and 20% of Americans trust the federal government to do the right thing. Ironically, confidence that had peaked in 1964 at 77% rose again to a brief high of 54% right after 9/11 before falling again.

Paths Not Taken

What other choices might have been made? Crisis offers the possibility of what the philosopher William James called “the moral equivalent of war” to mobilize society behind a disciplined joint effort with shared sacrifice. America’s efforts during World War II, particularly on the home front, come to mind with higher taxation, required military or civilian service, rationing of goods, and similar measures. Some voices advocated some or all of these things, but even at the time, those ideas looked like solutions in search of a problem. James himself recognized war’s exceptional nature and his proposal reflected the difficulty of creating those conditions under very different peacetime circumstances. Enthusiasm fades, along with unity of purpose. And turning society upside down lets terrorists win. Bush drew criticism for urging Americans to fly and visit the country’s great destinations, but his words captured the deeper reality that terrorism succeeds only when allowed to change how people live and the policies governments pursue.

It is hard to see how 9/11 could not have dominated American life at least for a time. Mitigating terrorism’s effects and enhancing resilience against them rightly became a priority after 9/11. Aggressive efforts to disrupt networks and foil their operations, along with bringing those responsible to justice, were both right and inevitable. They could have been pursued unilaterally where necessary, but also through multilateral frameworks to sustain international support as far as possible. Shared interests against terrorism made that kind of cooperation possible. They also justified punitive military campaigns against state sponsors of terror and safe havens.

We were drawn too deeply into open-ended commitments with an ill-defined war on terrorism and failed to recognize 9/11 as a uniquely horrible episode rather than the start of a new struggle. Like the Cold War, that conflict justified measures that soon proved imprudent or unpopular. Electronic surveillance, rendition of suspects to countries allowing enhanced interrogation, and direct attacks on terrorists abroad set precedents some might apply in a very different context at home. The war on terror also turned attention from other concerns that returned to prominence over the next decade with disruptive consequences. We now live in the world those decisions made. And made very much for the worse.

Author’s Postscript: This piece was written and circulated to respondents before the events of last month in Afghanistan.