Sometimes artificial intelligence can be a useful tool, but it can also alienate us from real goods.

Artificial Unintelligence

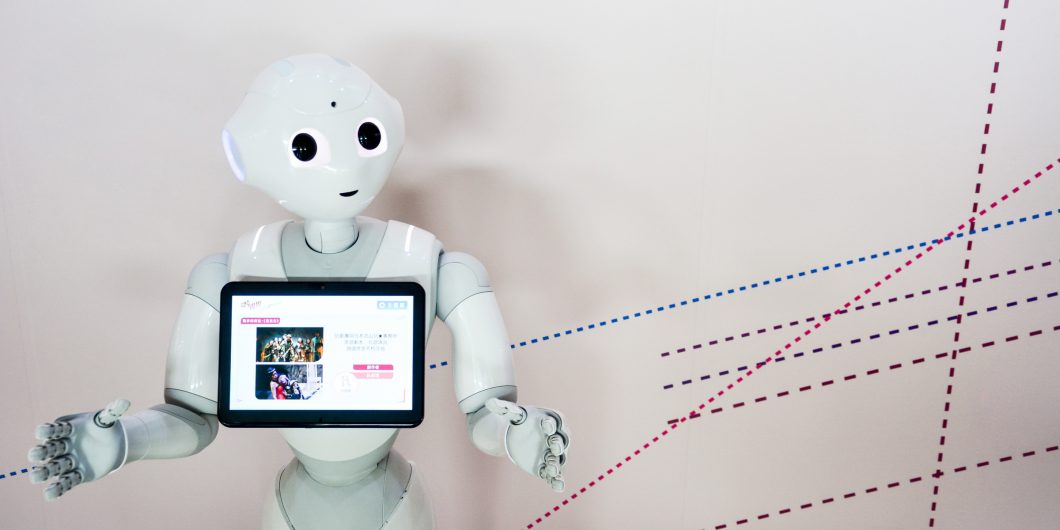

Even though he is only four years old, Pepper knows 15 languages. He can tell you where he is from (Paris) and how tall he is (120 cm). All of this is impressive until you ask him, “Do you have any feelings?” Pepper’s response is, “I don’t understand. How about a taco?” SoftBank’s Pepper is a humanoid robot and arguably the most impressive android in existence, but, alas, production of him has been paused because no one wants to buy what is essentially Alexa or Siri on wheels. Pepper is the current reality of artificial intelligence, and that reality is nowhere near the myth of artificial intelligence, viz., a machine that exceeds humanity in every meaningful respect.

René Descartes recognized at least two problems that Pepper would face. In Part V of his Discourse on the Method (1637), he proposed a thought experiment involving a machine that functions like a human body. Descartes argued that we would still be able to tell that the machine is not human, and he identified two ways we would do so. First, the machine would fail linguistically (we might call this the linguistic problem): it would not be able to speak grammatically, use jargon appropriately, or understand irony. Second, while the machine might perform certain tasks better than humans, say computation, it would fail at others, such as offering condolences on the death of a family member (we term this the specificity problem). Pepper clearly fails both tests, and in doing so he illustrates the barriers that stand before those who would create humanlike AI.

Cogito Ergo Fabulae

In Alan Turing’s seminal paper “Computing Machinery and Intelligence” (1950), he considered the question, “Can machines think?” To answer it, Turing proposed the Imitation Game, now known as the Turing Test. He was influenced by Descartes’ ruminations on a humanoid automaton as well as by the emergence of game theory as a mathematical discipline in the 1930s and 1940s. The Turing Test is a game with three players, in which an interrogator sits in a different room from a human and a machine. The interrogator asks both of them questions, and they respond in typed text. Based on their responses, the interrogator tries to identify which is the human and which is the machine. If the machine can imitate a human well enough to fool the interrogator, then it has passed the Turing Test.

Descartes’ two criteria are certainly necessary for a machine to pass the Turing Test, but John Searle argues that they are not sufficient: even if a machine passes the Turing Test, this does not guarantee that it has the kind of consciousness that is essential to human cognition.

Consider Searle’s four-decade-old thought experiment, the Chinese Room Argument, which runs as follows. Suppose that John, an English speaker who does not understand Chinese, is in a room with a set of English rules for manipulating Chinese logograms. John becomes so adept at manipulating the characters (Hanzi) that when his output is passed to Chinese speakers outside the room, they conclude that whoever wrote it understands Chinese. Searle’s Chinese Room Argument points to two additional difficulties for the possibility of humanlike AI. The completeness problem is that syntax is not semantics. In other words, while you can correctly transcribe 水 in context by mimicry, it does not follow that you know that this symbol means “water.” The replicant problem is that simulation is not duplication. For example, hunting boar in the video game The Hunter: Call of the Wild (simulation) is not the same as hunting a boar (duplication). As for Pepper, we have already noted that he fails Descartes’ two criteria and so fails the Turing Test. He does not escape the Chinese Room either, since he does not understand the symbols that appear on his screen, and he is merely a poor simulacrum of human thought and action.

Strong AI and Narrow AI are distinct. Strong AI, or Artificial General Intelligence (AGI), is an AI that solves the four problems Descartes and Searle pose. We may be mediocre chess players, but we can drive in stop-and-go traffic without much thought. We may have trouble computing the tip at a brewery in our heads, but without conscious thought we speak differently to our pastor, our boss, our children, and our childhood friends. Humans truly understand context, abstraction, and logic. Narrow (or Weak) AI is generally defined in contrast to Strong AI: it is any artificial intelligence that needs directed human intervention to solve problems (e.g., an AI that is trained by humans to play Jeopardy! but cannot play another game like Wheel of Fortune). For the remainder of this review, we consider only Strong AI, or AGI.

In The Myth of Artificial Intelligence, computer scientist and tech entrepreneur Erik J. Larson argues that computers, as we know how to build them, cannot think the way we do. So far, computers have not come close to passing the Turing Test, or even weaker versions of it. Larson states in the opening paragraph that the “myth of artificial intelligence is that its arrival is inevitable.” Larson does not dispute that true AI is possible, but he argues that it is not inevitable. According to Larson, two intertwined strands form the AI myth: culture and science. There is a third strand that Larson rightly explores in depth, viz., philosophy, but inexplicably he does not treat it as a separate filament, even though philosophy, and particularly the thought of Charles Sanders Peirce, plays a pivotal role in his argument against the myth of AI.

Cogito Ergo Machina

The notion of AI is part of the cultural fabric. Countless stories, movies, and television shows depict AI as capable not only of human-level intelligence but of a superintelligence that far surpasses humans. In Philip K. Dick’s Do Androids Dream of Electric Sheep? and in Ridley Scott’s film Blade Runner, which was based on Dick’s novel, the protagonist is trained to administer a Turing-esque test to humanoid androids (called replicants in the film). Both the novel and the film make us ponder the meaning of being human. Alex Garland’s dramatic film Ex Machina, which offers yet another variation on Turing’s Imitation Game, features an AI character named Ava, and like Eve, her biblical near-namesake, Ava must decide whether sin is worth the cost of leaving the Garden of Eden.

Larson illustrates that any AI that does not live up to these cultural expectations is nothing more than a fraud. While futurists like Ray Kurzweil claim that AI is inevitable, they offer no justification other than purported scientific laws like Kurzweil’s own Law of Accelerating Returns (LOAR), which holds that technological advancement proceeds exponentially. Therefore, says Kurzweil, it is inevitable that there will be immortal software-based humans whose intelligence surpasses the human ability to think. Larson does not dispute that Kurzweil’s conclusion is possible, but he rightly rejects Kurzweil’s bogus premise.

To demonstrate that LOAR is no actual law, Larson offers several arguments against the inevitability of AI. In particular, he takes apart the buzzworthy examples of computers’ success at video games, chess, language translation, and Jeopardy! Whatever the application, the purported AI fails for the same reasons. Let’s take Jeopardy! as the exemplar. IBM did create a computer that could succeed at Jeopardy!, in the sense that it could defeat human competitors, but it can do nothing else.

- Specificity. Could the computer play another game show like Wheel of Fortune?

- Linguistic. Could it give sympathy regarding the passing of Alex Trebek?

- Completeness. Does the computer understand what “what is” means when it responds, “What is [blank],” to an answer?

- Replicant. When the computer places its wager for the “Final Jeopardy!” round, is it nervous about losing?

The answer to these four queries remains a firm “no.” If a computer “mastering” Jeopardy! is the best that AI has, then we are a long way from worrying about Skynet from The Terminator.

Cogito Ergo Sum

If the specter of Turing haunts the pages of this book, the presence of Charles Sanders Peirce radiates throughout. Although Peirce is one of the greatest American philosophers, in both the breadth and the originality of his work, his thought is still largely unknown. Happily, Larson’s reliance on Peirce helps to remedy this unfortunate fact. Most important for Larson is Peirce’s division of logical inference into three distinct kinds: deduction, induction, and abduction. While AI excels at deductive logic and can be competent at inductive logic, it is a complete failure at abductive logic. Humans, of course, can use all three forms of logic, and seamlessly so. In syllogistic form, Peirce’s three types of inference are as follows.

- Deduction. All the beans from this bag are white. These beans are from this bag. Ergo, these beans are white.

- Induction. These beans are from this bag. These beans are white. Ergo, all the beans from this bag are white.

- Abduction. All the beans from this bag are white. These beans are white. Ergo, these beans are from this bag.

The abduction example commits the logical fallacy of affirming the consequent: the beans could be white without having come from this bag. While humans generally know when they can and cannot make such an inference, computers are tied to deduction and cannot cope with a departure from modus ponens. Larson concludes from this that until we have a theory of abduction akin to the ones we have for deduction and induction, true AI is impossible. In addition to this trio of inferential theories, we also need a metatheory that tells us when to use each type of inference. Thus, we can add a fifth problem for AI: inference.

If an AI could solve all five of these problems, then let us say that it has consciousness. The philosophical view that the brain is a computer and the mind is a computer program is called functionalism. It is peculiar that Larson, who adeptly explains challenging philosophical ideas, mentions neither this term nor the related notion of dualism. Functionalism is a type of materialism that rejects the distinction between mind (consciousness) and brain. The functionalist would argue, for example, that pain is not a qualitative, subjective experience but a physical state that is part of the functional patterns of the human brain. Thus the functionalist denies that consciousness is irreducible; on this view, cognition is entirely material and therefore equivalent to AI.

Cogito Ergo Anima

Is consciousness reducible? Larson does not offer a definitive answer to this question, but he is skeptical of the purported evidence that it is. How can a machine appreciate the act of sex? Although Larson mentions this only in passing, love and intimacy are fundamental to what makes conscious experience human. Even if a computer could produce a love story like Romeo and Juliet, we have no reason to believe it would comprehend the pathos of what it created. Further, what of the experience of being a parent? Consciousness allows us to know that the love we feel for our children transcends logical explanation, and thus storge is no more programmable than eros.

Drawing on the work of Michael Polanyi, Larson notes that there is a difference between reading a recipe and cooking from it. Polanyi used this distinction to discount the possibility of AI: a machine can do the former but never the latter, because doing something involves nuances that can never be captured by a mere description, and description is all that a programming language can provide. AI is also brittle. A machine learning algorithm, even with access to “Big Data” via the web and the cloud, can easily break. An AI that has been trained to play a video game successfully can fail at the slightest change, such as altering a few pixels in the game’s display.

And what of self-determination? Larson alludes to it in his discussion of how computers are programmed even when machine learning algorithms are “unsupervised,” but he never makes it explicit. The existential choice to carry on in the face of Kierkegaardian-Heideggerian anxiety is not programmable. The atheist Douglas Adams understood that an AI must experience existential angst to be truly conscious. Marvin the Paranoid Android, in Adams’ satirical The Hitchhiker’s Guide to the Galaxy series, is depressed and suicidal because he is aware of how much more intelligent he is than the humans he begrudgingly serves. We may live in a secular world, but it is striking that Larson never mentions a term like “soul.” Even if religious notions are anathema to him, they remain a fundamental part of human experience and deserve at least a passing mention.

Despite what it neglects, Larson’s book is excellent. It tells the story of how successful narrow AI has been in comparison with the failures of strong AI, and it shows why we have no reason to believe that these failures will turn into successes anytime soon. The Myth of Artificial Intelligence also serves as a warning to be skeptical of experts’ predictions and underscores the importance of sound theory to the proper practice of science.